Karsten Maurer – Iowa State University

In this post, I give my opinion on whether we should teach the bootstrap in introductory statistics courses. I think this question is best answered in two parts: (1) can introductory students generally understand bootstrapping concepts, and (2) is the additional bootstrapping material beneficial for student learning? The first part effectively asks “can we?”, which must be settled before we try to answer “should we?” My short answer to both is an emphatic yes! We can and should teach the bootstrap in introductory statistics courses. My slightly longer answer follows in the remainder of this post.

First, I will provide a bit of background on my classroom experience with this topic. I am a graduate student instructor at Iowa State University and have taught introductory statistics both with and without bootstrapping. In my first few years as an instructor, I taught several sections of Stat 104, an introductory statistics course designed for agricultural and biological science students. For the first year, I followed the traditional approach to teaching statistical inference based on the GAISE principles, in coordination with other sections of Stat 104. During the summer after that first year, I attended a randomization/simulation-based inference teaching workshop run by the authors of the Introduction to Statistical Investigations textbook. The workshop was quite motivating and convincing, and in the following year I modified the curriculum for my Stat 104 sections to use the first few weeks of the inference unit to introduce confidence intervals and hypothesis tests using simulation-based methods.

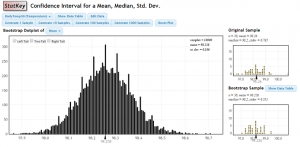

As there are several ways that bootstrapping could be incorporated into an inference curriculum, I will briefly elaborate on how bootstrapping was used in my course. We used the standard deviation of the bootstrap distribution as an estimate of the standard error when constructing approximate 95% confidence intervals (with about two standard errors as the margin of error). We chose this approach over basic or percentile bootstrap intervals because it led more naturally into the normal-based methods that followed a few weeks later in the inference unit, where students learn the theoretical justification for why the construction leads to 95% confidence.
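
The interval construction described above can be sketched in a few lines of Python. This is only an illustration, not the course's actual materials: the data values are made up, the function name `bootstrap_se_interval` is hypothetical, and the multiplier of 2 is an assumption (a common rounding of 1.96 for approximate 95% intervals).

```python
import random
import statistics

def bootstrap_se_interval(sample, num_resamples=1000, seed=0):
    """Approximate 95% interval: sample mean +/- 2 * (SD of bootstrap means)."""
    rng = random.Random(seed)
    n = len(sample)
    boot_means = []
    for _ in range(num_resamples):
        # Resample n values from the original sample, with replacement.
        resample = rng.choices(sample, k=n)
        boot_means.append(statistics.mean(resample))
    # The SD of the bootstrap distribution estimates the standard error.
    se = statistics.stdev(boot_means)
    center = statistics.mean(sample)
    margin = 2 * se  # assumed multiplier; 1.96 is the normal-based value
    return center - margin, center + margin, se

# Hypothetical measurements, invented purely for illustration.
data = [2.1, 3.4, 1.9, 4.0, 2.8, 3.1, 2.5, 3.7, 2.2, 3.0]
low, high, se = bootstrap_se_interval(data)
print(f"bootstrap SE = {se:.3f}, interval = ({low:.2f}, {high:.2f})")
```

Because the interval has the same "estimate plus or minus a multiple of the standard error" shape as the normal-based interval, only the source of the standard error changes when students later move to theory-based methods.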

In my experience, students were generally able to understand the process of bootstrapping very well. The process of resampling from the original sample seems to be much better understood than the concepts related to sampling distributions. I believe this is because the bootstrapping process is concrete: you literally have the data that you are going to repeatedly sample from. Sampling distributions, by contrast, are fundamentally abstract because it is extremely rare to actually sample repeatedly from a population. The concrete nature of the process provides the opportunity to teach the bootstrap by conducting resampling on data collected in the classroom. For example, we randomly selected a sample of 10 students from our class and recorded how many siblings each of these students had. Students were then able to create bootstrap samples through physical (using a 10-sided die) and computer resampling methods. As with learning most complex subjects, students faced some hang-ups with the bootstrap. The most common issues were confusion with distributional terminology (i.e., variability) and the inability to differentiate between the sample size and the number of bootstrap samples. In the end, however, the vast majority of students were able to learn how bootstrapping is conducted and how to use the resulting bootstrap distribution to create confidence intervals.
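
The die-rolling activity can be mimicked on a computer, which also makes the distinction between the sample size and the number of bootstrap samples explicit. The sketch below is an assumption-laden illustration: the sibling counts are invented (the post does not report the class data), and `roll_bootstrap_sample`, `sample_size`, and `num_bootstrap_samples` are hypothetical names.

```python
import random

def roll_bootstrap_sample(sample, rng):
    """Build one bootstrap sample by rolling a die with len(sample) faces."""
    n = len(sample)
    # Each roll picks one student (face 1..n); the same face can repeat,
    # which is what sampling with replacement means.
    rolls = [rng.randint(1, n) for _ in range(n)]
    return [sample[face - 1] for face in rolls]

rng = random.Random(2024)

# Invented sibling counts for 10 students, for illustration only.
siblings = [0, 1, 1, 2, 2, 2, 3, 1, 4, 0]

sample_size = len(siblings)      # n: values in EACH bootstrap sample
num_bootstrap_samples = 500      # B: how many times the process is repeated

boot_means = []
for _ in range(num_bootstrap_samples):
    resample = roll_bootstrap_sample(siblings, rng)
    boot_means.append(sum(resample) / sample_size)

# Every resample has n values; the bootstrap distribution has B values.
print(len(resample), len(boot_means))
```

Keeping `sample_size` and `num_bootstrap_samples` as separate named quantities mirrors the point students tend to confuse: increasing the number of resamples smooths the bootstrap distribution, but it does not add any new data.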

As I am now convinced that introductory statistics students can learn the bootstrap, I feel that the question of whether they should learn the bootstrap revolves around whether it leads to stronger learning outcomes. During one of the semesters of teaching Stat 104, I conducted a designed experiment to compare the learning outcomes for students under the traditional and simulation-based inference curricula. Dennis Lock (my co-investigator) and I were able to randomly assign students from two sections of Stat 104 to the two curricula through creative room scheduling and co-teaching. We found that student learning outcomes with respect to confidence intervals were significantly improved by the simulation-based curriculum, which introduced confidence intervals through bootstrapping. Specifically, we measured student learning using the ARTIST question sets and found a 7% improvement (0.7 points out of 10 after controlling for a few pre-treatment variables) in learning outcomes related to confidence intervals for students in the simulation-based curriculum over those in the traditional curriculum. This study is under review for publication in TISE and will hopefully be available shortly after this posting.

My opinion that students in introductory statistics courses can and should learn the bootstrap is formed through personal experience and through evidence of efficacy. Teaching the bootstrap did not just expose my introductory statistics students to a cool new inference approach; it also helped to improve their overall understanding of fundamental inference concepts.
