Chance News 90

Quotations

“Early on election day, in two tight, tucked-away rooms at Obama headquarters …, the campaign's data-crunching team awaited the nation's first results, from Dixville Notch, a New Hampshire hamlet that traditionally votes at midnight.

“Dixville Notch split 5-5. It did not seem an auspicious outcome for the president.

“[But t]heir model had gotten it right, predicting that about 50% of the village's voters were likely to support President Obama. …. And as the night wore on, swing state after swing state came in with results that were very close to the model's prediction. ….

“To build the ‘support model,’ the campaign in 2011 made thousands of calls to voters — 5,000 to 10,000 in individual states, tens of thousands nationwide — to find out whether they backed the president. Then it analyzed what those voters had in common. More than 80 different pieces of information were factored in — including age, gender, voting history, home ownership and magazine subscriptions.”

Los Angeles Times, November 13, 2012

Submitted by Margaret Cibes
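The quotation describes the support model only in outline: survey voters, then work out what supporters have in common. The sketch below is a hedged illustration of that idea. It assumes a logistic regression, which the article does not say the campaign used. The two binary features, the survey responses and the 10-voter village are all hypothetical. It also shows one way a village-level figure like Dixville Notch's "about 50%" could arise: as the average of individual support scores.

```python
import math

def sigmoid(z):
    """Logistic function mapping a real-valued score to a probability."""
    return 1.0 / (1.0 + math.exp(-z))

def score(w, x):
    """Support probability for feature vector x; w[0] is the intercept."""
    return sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], x)))

def fit_logistic(X, y, lr=0.5, epochs=2000):
    """Fit logistic-regression weights by batch gradient ascent on the log-likelihood."""
    w = [0.0] * (len(X[0]) + 1)
    for _ in range(epochs):
        grad = [0.0] * len(w)
        for xi, yi in zip(X, y):
            err = yi - score(w, xi)  # observed minus predicted support
            grad[0] += err
            for j, xj in enumerate(xi):
                grad[j + 1] += err * xj
        w = [wj + lr * gj / len(X) for wj, gj in zip(w, grad)]
    return w

# Hypothetical phone-survey responses.
# Features: [under_40, voted_dem_before]; label 1 = backs the president.
X = [[1, 1]] * 4 + [[1, 0]] * 2 + [[0, 1]] * 2 + [[0, 0]] * 4
y = [1, 1, 1, 0] + [1, 0] + [1, 0] + [0, 0, 0, 1]
w = fit_logistic(X, y)

# Hypothetical 10-voter village: predicted support share = mean of individual scores.
village = [[1, 1], [1, 0], [0, 1], [0, 0], [0, 0], [1, 1], [0, 1], [1, 0], [0, 0], [1, 1]]
share = sum(score(w, v) for v in village) / len(village)
print(f"weights: {[round(v, 3) for v in w]}, predicted share: {share:.0%}")
```

A real campaign model would use far more than two features (the article mentions more than 80), but the aggregation step is the same: a precinct-level forecast is the average of the individual scores.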

"Work. The best remedy for illness. One more reason why a person should never retire. The death rate among retired people is horrendous."

(It's not a Forsooth, because Garrison Keillor knows...)

Submitted by Jeanne Albert

Forsooth

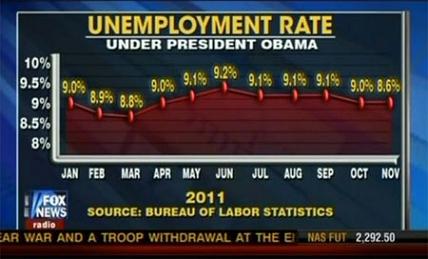

Compare the slope of the line for the changes in the unemployment rate early in 2011 with its slope from October to November.

Source: http://freethoughtblogs.com/lousycanuck/files/2011/12/121212_fox.jpg

This graphic is discussed on [Freethought blogs] and on the [Simply Statistics blog].

Submitted by Steve Simon

“I wonder if when you [Nate Silver] get up in the morning you open your kitchen cabinet and go, I’m feeling 18.5% Rice Chex and 27.9% Frosted Mini-Wheats and 32% one of those whole-grain Kashi cereals .... And then I wonder if you think, But I’m really feeling 58.3% like having a cupcake for breakfast ....”

The New Yorker, November 19, 2012

Submitted by Margaret Cibes

The signal and the noise

The big winner in the 2012 election was not Barack Obama. It was Nate Silver, the statistics wunderkind of the fivethirtyeight.com blog. Do not be surprised if he is Time Magazine’s 2012 Man (Person? Geek? Nerd?) of the Year. Just before the 2012 election, this is what Stephen Colbert, in his role as a right-wing megalomaniac, mockingly said about Silver’s ability to predict election outcomes:

Yes. This race is razor tight. That means no margin for error, or correct use of metaphor. I mean, it's banana up for grabs. But folks, every prediction out there needs a pooper. In this case, New York Times polling Jedi Nate Silver, who in 2008 correctly predicted 49 out of 50 states. But, you know what they say. Even a stopped clock is right 98% of the time.

See, Silver's got a computer model that uses mumbo jumbo like "weighted polling average", "trendline adjustment", and "linear regression analysis", but ignores proven methodologies like flag-pin size, handshake strength, and intensity of debate glare.

While the gut feel of the “punditocracy” was that the race would be very tight or that Romney would win in a landslide, Silver’s model, based on his weighted averaging of the extensive polling, predicted the outcome (popular vote and Electoral College vote) almost exactly. Here is a listing of what Silver and others predicted. The Washington Post had this description of Silver's achievement:

...I believe people are seriously misstating what Silver achieved. It isn’t that he predicted the election right where others botched it. It’s that he popularized a way of thinking about polling, a way to navigate through conflicting numbers and speculation, that would still have remained invaluable even if he’d predicted the outcome wrong.

Many liberals relied exclusively on Silver. But his model was only one of a number of polling trackers that were all worth consulting throughout — including Real Clear Politics, TPM, and HuffPollster — that were doing roughly the same thing: tracking averages of state polls.

The election results have triggered soul-searching among pollsters, particularly those who got it wrong. But the failure of some polls to get it right doesn’t tell us anything we didn’t know before the election. Silver’s approach — and that of other modelers — has always been based on the idea that individual polls will inevitably be wrong.

Silver’s accomplishment was to popularize tools enabling you to navigate the unavoidable reality that some individual polls will necessarily be off, thanks to methodology or chance. People keep saying Silver got it right because the polls did. But that’s not really true. The polling averages got it right.
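Colbert's "weighted polling average" and the Post's point that averages outperform individual polls can be illustrated with a small sketch. The weighting here is an assumption for illustration, not Silver's actual method, which has more ingredients (the Colbert quotation also mentions a trendline adjustment and regression analysis). Each poll is weighted by its sample size, since sampling variance shrinks roughly as 1/n, times an exponential recency discount with a hypothetical 7-day half-life. The polls themselves are made up.

```python
from dataclasses import dataclass

@dataclass
class Poll:
    margin: float     # candidate A minus candidate B, in percentage points
    sample_size: int  # number of respondents
    days_old: int     # days between fieldwork and the forecast date

def polling_average(polls, half_life_days=7.0):
    """Weighted mean margin: weight = sample size x recency discount."""
    weights = [p.sample_size * 0.5 ** (p.days_old / half_life_days) for p in polls]
    return sum(w * p.margin for w, p in zip(weights, polls)) / sum(weights)

# Hypothetical polls of one state that disagree with each other.
polls = [Poll(+3.0, 800, 2), Poll(-1.0, 600, 10), Poll(+1.0, 1200, 5)]
print(f"{polling_average(polls):+.2f} points")
```

No single poll has to be right for the average to be useful; the averaging damps the chance and methodological errors of individual polls, which is the point the Post makes.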

Clearly, Silver never sleeps: while he was pumping out simulations of the presidential and U.S. Senate races, he also published, just before the election, an amazing book, The Signal and the Noise: Why So Many Predictions Fail--but Some Don’t. The reviews are glowingly positive, as befits his track record. For instance, as Noam Scheiber put it, “Nate Silver has lived a preposterously interesting life…It’s largely about evaluating predictions in a variety of fields, from finance to weather to epidemiology…Silver’s volume is more like an engagingly written user’s manual, with forays into topics like dynamic nonlinear systems (the guts of chaos theory) and Bayes’s theorem (a tool for figuring out how likely a particular hunch is right in light of the evidence we observe).”

See also this review of the book by John Allen Paulos.
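Scheiber's gloss on Bayes’s theorem (how likely a hunch is to be right in light of the evidence) comes down to a one-line calculation. The minimal sketch below uses hypothetical numbers: a prior probability for the hunch, plus the probabilities of seeing the evidence if the hunch is true and if it is false.

```python
def bayes_posterior(prior, p_evidence_if_true, p_evidence_if_false):
    """P(hunch | evidence) via Bayes's theorem."""
    numerator = p_evidence_if_true * prior
    denominator = numerator + p_evidence_if_false * (1.0 - prior)
    return numerator / denominator

# Hypothetical: a 10% prior, and evidence four times as likely if the hunch is true.
posterior = bayes_posterior(prior=0.10, p_evidence_if_true=0.8, p_evidence_if_false=0.2)
print(f"{posterior:.3f}")  # prints 0.308, i.e. 0.08 / (0.08 + 0.18)
```

Even fairly strong evidence lifts a 10% hunch only to about 31%, a reminder that a low prior tempers what the evidence can do.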

Discussion

1. The above quotation from Scheiber fails to mention some other fascinating prediction topics in the book: chess, poker, politics, basketball, earthquakes, flu outbreaks, cancer detection, terrorism and, of course, baseball, Silver’s first success story. By all means, read the book, which is both scholarly (56 pages of end notes) and breezy. However, because the book is so U.S.-oriented, it may well be opaque to readers outside North America.

2. The above link from the Washington Post has Silver claiming 332 electoral votes for Obama and 203 [misprint, should be 206] for Romney, which turned out to be the exact result. However, on Silver’s blog itself, Obama gets only 313 electoral votes and Romney gets 225. Explain the discrepancy. Hint: Look at Silver’s prediction for Florida.

3. The above link from the Washington Post indicates that several other poll aggregators using similar methodology were just as accurate as Silver. Speculate as to why they are less celebrated.

4. Silver also predicted the outcomes of the U.S. Senate races. He got all but two of them right: he was quite wrong in one and spectacularly wrong in another. Which two were they? Speculate as to why Silver was less successful predicting the Senate races than the presidential race.

5. Silver’s use of averaging to improve a forecast has a long history in statistics. A famous example comes from Francis Galton, over 100 years ago:

In 1906, visiting a livestock fair, he stumbled upon an intriguing contest. An ox was on display, and the villagers were invited to guess the animal's weight after it was slaughtered and dressed. Nearly 800 participated, but not one person hit the exact mark: 1,198 pounds. Galton stated that "the middlemost estimate expresses the vox populi, every other estimate being condemned as too low or too high by a majority of the voters", and calculated this value (in modern terminology, the median) as 1,207 pounds. To his surprise, this was within 0.8% of the weight measured by the judges. Soon afterwards, he acknowledged that the mean of the guesses, at 1,197 pounds, was even more accurate.

Presumably, those 800 villagers in 1906 knew something about oxen and pounds. Suppose Galton had asked the villagers to guess the number of chromosomes of the ox. Why would averaging likely be useless in this case?

6. Suppose instead that Galton had asked the villagers to come up with a number for the (putatively) famous question of the medieval era: “How many angels can dance on the head of a pin?” Why is this different from asking about the weight of an ox or its number of chromosomes?

7. Although Silver devotes many pages to the volatility of the stock market, he barely mentions Nassim Taleb and his “black swans” (only in a footnote on page 368). Rather than black swans and fractals, Silver invokes the power-law distribution to explain “very occasional but very large swings up or down” in the stock market and the frequency of earthquakes. For more on the power-law distribution, see this interesting Wikipedia article.

8. One of the lessons of the book is that predicting a specific phenomenon successfully requires a data-rich environment. Therefore, ironically, weather forecasting is, so to speak, on much firmer ground than earthquake forecasting.

9. Another lesson of the book is that in poker, now that most of the poor players have left the scene, it is easier to make money by owning the house than by playing. Knowledge of Bayes’s theorem can only go so far.
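For discussion question 2, one possible line of attack is that a headline electoral-vote number can be computed in two ways. It can award every state to the favourite, or it can weight each state's electoral votes by the candidate's probability of winning it. This is an assumption, not something the Post or Silver's blog states here. Under the second method, a near coin-flip state counts fully in the first tally but only about half in the second. The states and probabilities below are hypothetical.

```python
def called_votes(states):
    """Award each state's electoral votes to the favourite (win probability > 0.5)."""
    return sum(ev for ev, p in states if p > 0.5)

def expected_votes(states):
    """Probability-weighted electoral votes: sum of ev * p over states."""
    return sum(ev * p for ev, p in states)

# Hypothetical (electoral votes, candidate's win probability) pairs;
# the first entry is a toss-up state.
states = [(29, 0.51), (18, 0.9), (13, 0.8), (55, 1.0)]
print(called_votes(states), round(expected_votes(states), 2))
```

The toss-up state alone accounts for roughly half of its 29 votes of difference between the two tallies; the less-than-certain favourites account for the rest.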
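The contrast in discussion item 5 between an ox's weight and its chromosomes can be simulated. The sketch makes two hypothetical assumptions. Informed guessers report the true value plus independent, unbiased noise. Uninformed guessers draw numbers from a range that has nothing to do with the truth. Averaging cancels the first kind of error but merely reproduces the centre of the second. Only the 1,198-pound weight and the roughly 800 participants come from the quotation; the noise level, the guessing range and the random seed are invented.

```python
import random
import statistics

def informed_guesses(truth, n, spread, rng):
    """Truth plus independent, unbiased Gaussian noise."""
    return [rng.gauss(truth, spread) for _ in range(n)]

def uninformed_guesses(n, low, high, rng):
    """Uniform draws that ignore the truth entirely."""
    return [rng.uniform(low, high) for _ in range(n)]

rng = random.Random(1906)
truth = 1198.0  # dressed weight of the ox, in pounds

informed = informed_guesses(truth, 800, spread=100.0, rng=rng)
uninformed = uninformed_guesses(800, low=0.0, high=2000.0, rng=rng)

for label, guesses in [("informed", informed), ("uninformed", uninformed)]:
    mean, median = statistics.mean(guesses), statistics.median(guesses)
    print(f"{label:>10}: mean {mean:7.1f}  median {median:7.1f}  truth {truth:.0f}")
```

The informed crowd's mean and median land within a few pounds of the truth. The uninformed crowd's mean sits near 1,000, the middle of the guessing range, and would stay there whatever the true value was.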
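The power-law point in discussion item 7 can be made concrete by comparing tail probabilities. The sketch uses a Pareto distribution with a hypothetical exponent alpha = 3. For that power law, doubling the threshold always cuts the probability of exceeding it by the same factor, 2^(-alpha) (0.125 here). For a normal distribution, the cut grows ever more drastic, so the “very occasional but very large swings” become vanishingly rare. This is a generic illustration, not Silver's model of markets or earthquakes.

```python
import math

def pareto_tail(x, x_min=1.0, alpha=3.0):
    """P(X > x) for a Pareto (power-law) distribution with minimum value x_min."""
    return 1.0 if x < x_min else (x_min / x) ** alpha

def normal_tail(z):
    """P(Z > z) for a standard normal variable."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))

for x in (2.0, 4.0, 8.0):
    print(f"x={x:>3}: power-law ratio {pareto_tail(2 * x) / pareto_tail(x):.3f}, "
          f"normal ratio {normal_tail(2 * x) / normal_tail(x):.2e}")
```

The power-law ratio stays fixed while the normal ratio collapses toward zero. That scale-free behaviour is why a power law leaves room for rare, very large events.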

Submitted by Paul Alper

Clinical trials need to be adapted to the Mayan calendar

The Mayan Doomsday’s effect on survival outcomes in clinical trials. Paul Wheatley-Price, Brian Hutton, Mark Clemons. CMAJ, December 11, 2012, vol. 184, no. 18, doi: 10.1503/cmaj.121616.

Will the world end when the Mayan calendar runs out on December 21, 2012? If so, we need to prepare.

Such an event would undoubtedly affect population survival and, thus, survival outcomes in clinical trials. Here, we discuss how the outcomes of clinical trials may be affected by the extinction of all mankind and recommend appropriate changes to their conduct.

This paper presents a Kaplan-Meier curve illustrating the effect of the extinction of humankind, along with gradual zombie repopulation.

The authors go on to note that extinction will likely mask any mortality difference between two arms of a clinical trial and that it will make the recording of adverse event data impossible.
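For readers who have not met a Kaplan-Meier curve: it estimates survival as a running product of (1 - deaths / number at risk) over the observed death times. Censored patients leave the risk set without counting as deaths. The minimal sketch below uses invented data for two hypothetical trial arms. Arm A has one early death and one censored patient; arm B has none. Every patient still in follow-up dies on day 30, which stands in for the apocalypse. Both curves drop to zero on that day, which is one way to see the authors' point that extinction would mask any mortality difference between arms.

```python
def kaplan_meier(times, died):
    """Return [(t, S(t))] at each distinct death time.

    times: follow-up time for each patient; died: True for death, False for censoring.
    """
    curve, survival = [], 1.0
    for t in sorted({t for t, d in zip(times, died) if d}):
        at_risk = sum(1 for s in times if s >= t)  # patients still followed at time t
        deaths = sum(1 for s, d in zip(times, died) if s == t and d)
        survival *= 1.0 - deaths / at_risk
        curve.append((t, survival))
    return curve

# Hypothetical arms; the patient followed to day 12 in arm A is censored.
arm_a = kaplan_meier([5, 12, 30, 30, 30, 30], [True, False, True, True, True, True])
arm_b = kaplan_meier([30] * 6, [True] * 6)
print(arm_a)
print(arm_b)
```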

Questions

1. If you are a member of a data and safety monitoring board (DSMB) overseeing a clinical trial and the world ends, would that be sufficient grounds for stopping the trial early, or would you continue the trial to the planned endpoint in order to preserve the Type I error rate?

2. Is death due to the apocalypse considered an unexpected adverse event? If so, how quickly does it need to be reported?