Chance News 9

Revision as of 16:12, 16 November 2005

Quotation

Mathematics is not an opinion.

Anonymous.

Forsooth

Here are some Forsooth items from the October issue of RSS News.

But a good degree can make all the difference..to the prospect of actually being able to pay off that massive student debt-- the majority of students now graduate owing a crushing 13,501 pounds.

23 April 2005

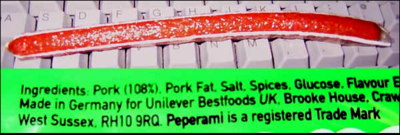

The RSS Forsooth also included a picture of a Peperami sausage label whose ingredients list started with Pork (108%).

The blogs had great fun with this Forsooth. Benbryves, a member of GameDev.net, posted the following picture of the back of the Peperami sausage that he bought at a Sainsbury's supermarket.

Droid Young on the Team Phoenix Rising Forums website said that he wrote to the company and got the following answer:

Dear Mr Young

Thank you for your enquiry.

Peperami is a salted and cured spicy meat snack.

The weight of the raw meat going into Peperami exceeds the weight of the end product because the recipe loses moisture, and therefore loses weight, during the fermentation, drying and smoking process.

Please contact us again if we can be of any further assistance.

Kind regards

DISCUSSION:

Does the company's answer seem reasonable to you?

Supreme Court Nominee Alito: Statistics Misleading?

An article in Salon.com for October 31 discusses the case Riley v. Taylor. The plaintiff Riley, convicted at trial of first-degree murder, was African-American; at the trial, the prosecution used its peremptory challenges to eliminate all three of the African-Americans on the jury panel. In the same county that year, there were three other first-degree murder trials, and in every one of those cases all of the African-American jurors were struck.

Riley appealed his conviction. A majority of the judges on the appeals court thought that there was evidence that jurors were struck for racial reasons. According to them, a simple calculation indicates that there should have been five African-American jurors amongst the forty-eight that were empanelled in the four cases. However, there were none. To these judges, this was clear evidence of racial motivation in the striking of such jurors.

Judge Alito dissented. He called the majority's analysis simplistic, and stated that although only 10% of the U.S. population is left-handed, five of the last six people elected president of the United States were left-handed. He asked rhetorically whether this indicated bias against right-handers amongst the U.S. electorate.

The majority responded that there is no provision in the Constitution that protects persons from discrimination based on whether they are right-handed or left-handed, and that to compare these cases with the handedness of presidents ignores the history of racial discrimination in the United States.

Questions

1) Assuming that Judge Alito is correct about the proportion of left-handers in the U.S. population, what is the probability that five of the last six presidents elected would be left-handed?

2) Is Judge Alito's comparison biased by the fact that he chose just the last six presidents? Presumably he believed that the president just before this group was right-handed, otherwise he would have included him in the sample and said "six of the last seven presidents." What is the relevant statistic?

3) When the decision came down in 2001, the last six people elected president were George W. Bush, Clinton, George H. W. Bush, Reagan, Carter and Nixon. Of these, Clinton and George H. W. Bush were left-handed. Ford, who was left-handed, was not elected president (or even vice-president); he became president upon the resignation of Richard Nixon. Reagan may have been left-handed as a child, but he wrote right-handed so his case isn't clear; the Reagan Presidential Library says that he was "generally right-handed." Judge Alito may have been confused, including on his list Ford (who was not elected) and George W. Bush (who is not left-handed, although his father is), as well as Reagan. The other left-handed presidents were Truman and Garfield. Hoover is found on some lists of left-handed presidents, but according to the Hoover Institution, he was not left-handed. How does this information affect Alito's argument?

4) Suppose that 5/48 of the jury pool were African-American. What is the probability that no juror amongst the 48 selected would have been African-American? How does this compare with the actual statistics of left-handed presidents? Does the same objection apply to this case as might apply to Alito's example?

5) What is your opinion? Is there clear statistical evidence of racial bias in the use of peremptory challenges in this county?

6) What does it say about our judiciary that Judge Alito could get his facts as wrong as he did, and that none of the judges in the majority caught the errors?
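The arithmetic behind questions 1, 3 and 4 can be sketched with a binomial model. This is only a rough sketch: it assumes that presidents are independent draws from a population that is 10% left-handed, and that each of the 48 jurors is an independent draw with a 5/48 chance of being African-American. Neither assumption comes from the court record, and the function name `binom_pmf` is ours.

```python
from math import comb

def binom_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials."""
    return comb(n, k) * p**k * (1 - p)**(n - k)

P_LEFT = 0.10  # Judge Alito's figure for left-handers in the U.S.

# Question 1: five (or at least five) left-handers among six presidents.
exactly_five = binom_pmf(5, 6, P_LEFT)
at_least_five = exactly_five + binom_pmf(6, 6, P_LEFT)

# Question 3: the record suggests only two of the six were left-handed.
at_least_two = 1 - binom_pmf(0, 6, P_LEFT) - binom_pmf(1, 6, P_LEFT)

# Question 4: no African-American among 48 jurors (assumed independent draws).
no_black_jurors = binom_pmf(0, 48, 5 / 48)

print(f"exactly 5 of 6 left-handed:  {exactly_five:.6f}")
print(f"at least 5 of 6 left-handed: {at_least_five:.6f}")
print(f"at least 2 of 6 left-handed: {at_least_two:.4f}")
print(f"0 of 48 jurors:              {no_black_jurors:.4f}")
```

Under these assumptions, two or more left-handers among six presidents is unremarkable (a bit over one chance in ten), while an all-white set of 48 jurors has a probability of roughly 0.005. Whether the independence assumptions are reasonable is part of what questions 2 and 4 ask.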

Contributed by Bill Jefferys

Who wants Airbags

Who wants Airbags?

Chance Magazine, Spring 2005

Mary C. Meyer and Tremik Finney

This article is available from the Chance Magazine web site under "Feature Articles". The data for this study are available at http://www.stat.uga.edu/~mmeyer/airbags.htm.

Here is the authors' description of their study.

Airbags are known to save lives. Airbags are also known to kill people. It is widely believed that the balance is in favor of airbags. Government and private studies have shown a statistically significant beneficial effect of presence of airbags on the probability of surviving an accident. However, our study suggests the opposite: that airbags have been killing more people than they have been saving.

The National Highway Traffic Safety Administration (NHTSA) keeps track of deaths due to airbags; you can find a list of deaths on the NHTSA web site, along with conditions under which these deaths occurred. Each death occurred in a low speed collision, and for each, there is no other possible cause of death. Is it reasonable to assume that airbags can kill people only at low speeds? Isn't it more likely that airbags also kill people at higher speeds, but the death may be attributed to the crash? In this study we compare fatality rates for occupants with airbags available to fatality rates for occupants without airbags, controlling for possible confounding factors such as seatbelt use, impact speed, and direction of impact. What effect does the presence of an airbag have on the probability of death in a crash, under various conditions?

The main difference between our study and the previous studies is the choice of the dataset. We use the NASS CDS database, which is a stratified random sample of crashes nationwide. Previous studies showing beneficial effects of airbags have all used the FARS database, which contains data for all crashes in which a fatality occurred. We can limit our analyses to a subset of the NASS CDS database, choosing only crashes where there was at least one fatality; this should be a random sample of the FARS database. When we perform the analyses on this subsample, we can reproduce the results of previous studies: in accidents in which a fatality occurs, airbags are beneficial. However, for the entire random sample of crashes, they increase rather than decrease the probability of death.

Here is an analogy to help understand this: If you look at people who have cancer, radiation treatment will improve their probability of survival. However, radiation treatment is dangerous and can actually cause cancer. Making everyone in the country have airbags and measuring effectiveness only in the fatality group, is like making everyone have radiation treatment and looking only at the cancer group to check efficacy. Within the cancer group, radiation will be found to be effective, but there will be more deaths on the whole.

This is what seems to be happening with airbags. In a severe accident, airbags can save lives. However, they are inherently dangerous and pose a risk to the occupant. Our analyses show that in lower-speed crashes, the occupant is significantly more likely to die with an airbag than without. This effect is not seen in the analysis using FARS, because this database does not contain information about low-speed crashes without deaths.

Our analysis shows that previous estimates of airbag effectiveness are flawed, because a limited database was used. We have demonstrated this by reproducing their results, using a subsample of the CDS data. The new estimates of effectiveness suggest that airbags are not the lifesaving devices we have believed them to be.

Of course, one should ask what the National Highway Traffic Safety Administration (NHTSA) thinks of this. Chance Magazine asked the agency to respond, and it did so. The response, by Charles Kahane, was that the FARS data should be used, since the CDS dataset that the authors used was too small to yield significant results.

The authors of the Chance Magazine article replied that they were able to get significant results using the CDS data, and that using the FARS data would be answering the wrong question. They write:

If a front-seat occupant wishes to ask the question, "If I get in an accident, am I less likely or more likely to die if I have an airbag?", the proper way to answer this question is with the CDS dataset. With the FARS dataset, the question one can answer is, "If I get in a highway accident in which there is at least one fatality, am I less likely or more likely to die if I have an airbag?"

The article itself does not include this exchange but you can read it here.
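The two questions in the authors' reply condition on different events, and a toy calculation shows that their answers can point in opposite directions. Everything in the sketch below is hypothetical: the numbers are invented for illustration and are not taken from the CDS or FARS data, each crash is assumed to involve the occupant of interest plus one other person, and the two deaths are assumed independent given the crash speed. It is not the authors' model.

```python
# Hypothetical toy numbers, NOT taken from the NASS CDS or FARS data.
# speed: (share of crashes, P(other person dies),
#         P(occupant dies | airbag), P(occupant dies | no airbag))
SPEEDS = {
    "low":  (0.99, 0.0, 0.005, 0.0005),
    "high": (0.01, 0.9, 0.1,   0.5),
}

def death_risk(airbag, fatal_crashes_only):
    """Occupant's death probability, over all crashes or only over
    crashes with at least one fatality (the FARS-style subset)."""
    p_occupant_dies = 0.0
    p_any_death = 0.0
    for share, p_other, p_bag, p_no_bag in SPEEDS.values():
        p_occ = p_bag if airbag else p_no_bag
        p_occupant_dies += share * p_occ
        # At least one death = not (both survive); deaths assumed independent.
        p_any_death += share * (1 - (1 - p_occ) * (1 - p_other))
    if fatal_crashes_only:
        return p_occupant_dies / p_any_death
    return p_occupant_dies

for subset in (False, True):
    label = "fatal crashes only" if subset else "all crashes"
    print(f"{label}: airbag {death_risk(True, subset):.4f}, "
          f"no airbag {death_risk(False, subset):.4f}")
```

With these invented numbers, the airbag raises the risk of death over all crashes but appears protective within the subset of crashes that had at least one fatality, because that subset omits the low-speed crashes in which nobody died.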

This would be a great article to discuss in a statistics class. The authors clearly explain the methods they used and why they used them. Since they have provided the data for their study, students could use it for their own analysis.

Submitted by Laurie Snell

Pick a number, any number

Problems with measures of government performance and productivity, The Economist, Nov 3rd 2005.

PSA targets: the devil in the detail, (UK) Statistics Commission, October 2005 - you can comment on the report up to the end of this month (Nov 2005).

A recent report from the Statistics Commission, an independent body that monitors UK official statistics, claims that many Government performance targets are seriously flawed. These Public Service Agreement (PSA) targets by, and for, government departments are intended to measure the desired outcomes from Government policies, in particular spending policies. There are a total of 102 separate PSA targets that were set in the 2004 Spending Review.

Some were criticised for being incomprehensibly complex, such as the 23 separate indicators for British research innovation performance, each with its own target and four milestones. The report says some targets would be missed if a single case falls below a given threshold. For example, if even one school out of 3,400 fails to bring half its pupils up to scratch in each of English, maths and science, then that target would be missed. In other cases, the necessary data doesn't exist.

A frequently-voiced criticism of the targets associated with previous spending reviews was that many had been defined without sufficient consideration of the Government’s ability to measure performance against them. That is, attempting to quantify some aspirational targets leads to the creation of targets that seem artificial or forced.
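To get a feel for how demanding an "every school must pass" target is, suppose (purely as an illustration) that each of the 3,400 schools independently clears the threshold with the same probability. The per-school probabilities below are hypothetical and the independence assumption is ours, not the report's.

```python
N_SCHOOLS = 3400  # number of schools quoted in the report

def prob_target_met(p_school, n_schools=N_SCHOOLS):
    """Probability that every school passes, assuming independent schools
    that each pass with probability p_school (a hypothetical figure)."""
    return p_school ** n_schools

for p in (0.99, 0.999, 0.9999):
    print(f"per-school pass probability {p}: "
          f"target met with probability {prob_target_met(p):.4f}")
```

Under these assumptions, even schools that each pass 999 times in 1,000 would jointly meet the target only about 3% of the time.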

It is however obvious that the availability, credibility and validity of data indicating progress against these targets is fundamental to trust in the integrity of the target-setting process. The Commission is therefore concerned to assess whether the statistical evidence to support PSAs is adequate. The question of whether the statistical underpinnings are adequate can only be answered ‘bottom up’ by reviewing each and every target.

In view of the importance of PSA targets, the Commission recommends that more consideration should be given to the adequacy of the statistical infrastructure to support their future evolution.

What is needed is a robust cross-government planning system for official statistics that can pick up the future data requirements (to support the setting of targets) at the earliest possible stage and feed those effectively into the allocation of departmental resources.

The Commission recommends that government departments should pay more attention to data quality issues. It quotes the National Audit Office, which says:

the allocation of clear responsibility for data quality and active management oversight of data systems would reinforce the importance of data quality.

The Royal Statistical Society has produced a report which comments on aspects of the PSA targets.

Further reading

Performance Indicators: Good, Bad and Ugly, Royal Statistical Society - Working Party on Performance Monitoring in the Public Services, which stresses that performance monitoring when done badly can be very costly, not merely ineffective but harmful and indeed destructive.

Submitted by John Gavin.

A roulette winner

Judge rules for Madrid gambler

The Guardian, June 24, 2004

Ben Sills in Granada

Court backs gambler

The Age, Madrid, June 24, 2004

Beginning in 1990, Gonzalo Garcia-Pelayo, with the help of friends and relatives, made a statistical study of the winning numbers in roulette at the Casino Gran Madrid. He found that some numbers won as often as once in every 28 throws, apparently because of imperfections in the wheel, floors not being level, irregularly sized ball slots, etc.

The article reports that Gonzalo used his analysis to win more than a million euros during a two-year run. From another article we learn that Gonzalo was denied entry to the casino in 1992, but in 1994 the government overruled the casino. The casino then tried to get the courts to uphold its right to refuse Gonzalo entry. For ten years the case went through the courts, and in 2004 Spain's Supreme Court ruled against the casino.
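To see why a number that wins once in every 28 throws is worth finding, compare the expected return on a single-number bet for a fair wheel and for the biased number. The sketch below assumes a European single-zero wheel with 37 pockets and the usual 35-to-1 payout on a single number; neither detail is stated in the articles.

```python
from fractions import Fraction

PAYOUT = 35  # assumed: a winning single-number bet pays 35 to 1

def expected_return(p_win):
    """Expected profit per unit staked on a number that wins with probability p_win."""
    return p_win * PAYOUT - (1 - p_win)

fair = expected_return(Fraction(1, 37))    # assumed fair 37-pocket wheel
biased = expected_return(Fraction(1, 28))  # frequency reported for some numbers

print(f"fair wheel:    {fair} = {float(fair):+.3f} per unit bet")
print(f"biased number: {biased} = {float(biased):+.3f} per unit bet")
```

Under these assumptions, the house edge of 1/37 on a fair wheel turns into a player advantage of 2/7 per unit staked on the favoured numbers.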

According to The Age article:

The Supreme Court ruling said Garcia-Pelayo and company used "ingenuity and computer techniques. That's all."

The Guardian reports:

But despite this week's ruling he has no plans to return to Madrid's roulette tables. "I'm too well known," he said. Instead he plans to sue the casino for 1.2 million euros in lost earnings.

Gonzalo's story was the subject of a History Channel program and is available from their website as a DVD.

This was part of the History Channel's "Breaking Vegas" series of 13 programs, which tell the stories of Ed Thorp, the MIT group, and others who have tried to beat the casinos. You can read a review of this series here.