Chance News 15

Revision as of 14:18, 12 March 2006

Quotation

Take statistics. Sorry, but you'll find later in life that it's handy to know what a standard deviation is.

New York Times, March 2, 2006

This appears on a list of core knowledge that columnist David Brooks says will be sufficient to give you a great education even if you don't get into Harvard.

Forsooth

From the February 2006 RSS news we have:

One primary school in East London has a catchment area of 110 metres.

23 October 2005

More on medical studies that conflict with previous studies

Humans being what they are, it is only natural that when a study's findings seem plausible, no one feels the need to look too closely. On the other hand, when the outcomes go against what was expected, a great deal of inspection is called for. This was discussed here.

The Wall Street Journal of February 28, 2006 details possible design flaws in the Women's Health Initiative that may have led to "murky results." In summary, the WSJ reported:

- Calcium/Vitamin D study:
  - Message: Supplements don't protect bones or cut risk of colorectal cancer.
  - Problem: Those in the placebo group also took supplements in many cases.

- Low-fat diet study:
  - Message: Doesn't cut risk of breast cancer.
  - Problem: Few met the fat goal.
  - A 22% drop in risk for women who cut fat the most got little emphasis.

- Hormone study:
  - Message: No benefit, possible increased cancer and heart risk.
  - Problem: Most in the study were too old for this to apply to menopausal women.

More generally, according to the WSJ, "Design problems in all of the trials mean the results don't really answer the questions they were supposed to address. And a flawed communications effort led to widespread misinterpretation of results by the news media and public."

In particular, in order to reduce the number of participants for the studies, "more than half [of the women] took part in at least two of them, and more than 5,000 were in all three trials." As might be imagined, "Among problems this posed was simple burnout," which "contributed to compliance problems that plagued all three and hurt the reliability of their results."

Another problem was the difficulty of double blinding in the hormone study, since any hot flashes would indicate to the patient (and to her physician) that she was in the placebo arm. To get around this impediment, the vast majority of the women recruited were well past menopause, which biased the results against the benefits of hormone replacement.

So where are we after 68,132 female participants, "fifteen years and $725 million later"? More than likely, the Women's Health Initiative study will be in and out of the news for some time to come because of its ambiguity.

Submitted by Paul Alper

Economists analyze the TV show "Deal or No Deal"

Why game shows have economists glued to their TVs

Wall Street Journal, Jan. 12, 2006

Charles Forelle

Economists Learn from Game Show 'Deal or No Deal'

NPR, March 3, 2006, All Things Considered

David Kestenbaum

Deal or No Deal? Decision making under risk in a large-payoff game show

Thierry Post, Martijn Van Den Assem, Guido Baltussen, Richard H. Thaler

February 2006

The authors of the research paper write:

The popular television game show "Deal or No Deal" offers a unique opportunity for analyzing decision making under risk: it involves very large and wide-ranging stakes, simple stop-go decisions that require minimal skill, knowledge or strategy and near-certainty about the probability distribution.

Here is a nice description of the game from the AMS Math in the Media magazine:

- Twenty-six known amounts of money, ranging from one cent to one million dollars, are (symbolically) randomly placed in 26 numbered, sealed briefcases. The contestant chooses a briefcase. The unknown sum in the briefcase is the contestant's.

- In the first round of play, the contestant chooses 6 of the remaining 25 briefcases to open. Then the "banker" offers to buy the contestant's briefcase for a sum based on its expected value, given the information now at hand, but tweaked sometimes to make the game more interesting. The contestant can accept ("Deal") or opt to continue play ("No Deal").

- If the game continues, 5 more briefcases are opened in the second round, another offer is made, and accepted or refused. If the contestant continues to refuse the banker's offers, subsequent rounds open 4, 3, 2, 1, 1, 1, 1 briefcases until only two are left.

- The banker makes one last offer; the contestant accepts that offer or takes whatever money is in the initially chosen briefcase.
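The schedule above accounts for every briefcase: 25 cases are available to open, and the nine rounds open 6 + 5 + 4 + 3 + 2 + 1 + 1 + 1 + 1 = 24 of them, leaving the contestant's case and one other. A small sketch (the function name is our own) that tracks how many briefcases are still unopened after each round:

```python
# Briefcases opened in each of the nine rounds, per the description above
OPENED_PER_ROUND = [6, 5, 4, 3, 2, 1, 1, 1, 1]

def unopened_after_each_round(total=26, schedule=OPENED_PER_ROUND):
    """Count the unopened briefcases (including the contestant's) after each round."""
    remaining = total
    counts = []
    for opened in schedule:
        remaining -= opened
        counts.append(remaining)
    return counts

print(unopened_after_each_round())  # [20, 15, 11, 8, 6, 5, 4, 3, 2]
```

Round 8 leaves three unopened briefcases and round 9 leaves two, which matches the positions described in the game below.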

So the banker is always trying to buy out the player. If he fails, the player ends up with the amount in his briefcase.

The best way to understand the game is to play it here on the NBC website.

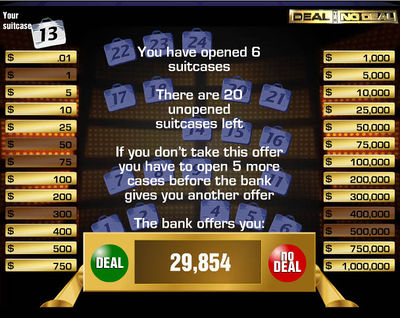

We did this playing only the first round. We chose number 13 for our briefcase and were then asked to choose six briefcases to open. We chose briefcases 3, 10, 12, 13, 19, and 25. Here is the result of this round:

Our six choices eliminated the amounts $1, $50, $75, $300, $400,000, and $500,000. The expected value of our briefcase is now the average of the amounts in the remaining 20 briefcases. Making this calculation, we find that it is $125,900 (rounded to the nearest dollar). The banker offered us $29,854 to quit, so we would have to be pretty risk averse to accept the banker's offer at this point.

In order to see if the banker's offers get better, we played a second game in which we refused all the banker's offers. With this strategy our expected winnings at the start are the average of the 26 initial amounts, which we found to be $131,478.
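The averages above can be checked in a few lines. The sketch below assumes the standard 26-amount US board of 2006 ($0.01 up to $1,000,000). That list is an assumption, since the individual amounts are not listed here, but it reproduces both the $131,478 starting average and the $125,900 figure after our first round. The function names are our own.

```python
# Assumed: the 26 amounts on the US (NBC) board in 2006, in dollars
US_BOARD = [0.01, 1, 5, 10, 25, 50, 75, 100, 200, 300, 400, 500, 750,
            1_000, 5_000, 10_000, 25_000, 50_000, 75_000, 100_000,
            200_000, 300_000, 400_000, 500_000, 750_000, 1_000_000]

def expected_value(amounts):
    """Expected amount in our briefcase: every unopened amount is equally likely."""
    return sum(amounts) / len(amounts)

def remove_opened(amounts, opened):
    """Return the amounts still in play after the opened cases are revealed."""
    left = list(amounts)
    for x in opened:
        left.remove(x)  # raises ValueError if x is not on the board
    return left

start = expected_value(US_BOARD)
after_round_1 = expected_value(remove_opened(US_BOARD, [1, 50, 75, 300, 400_000, 500_000]))
print(round(start), round(after_round_1))       # 131478 125900
print(f"offer / EV = {29_854 / after_round_1:.1%}")  # offer / EV = 23.7%
```

The offer of $29,854 is thus a bit under a quarter of the expected value after the first round.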

We then played all 9 rounds. In each round we recalculated the expected amount in our briefcase and recorded the banker's offer but refused it. Here are the results:

| Round | Expected value ($) | Banker's offer ($) |
|---|---|---|
| 1 | 130,857 | 30,565 |
| 2 | 142,475 | 37,361 |
| 3 | 125,186 | 54,507 |
| 4 | 153,319 | 57,554 |
| 5 | 170,967 | 67,160 |
| 6 | 205,160 | 108,950 |
| 7 | 256,425 | 176,781 |
| 8 | 341,800 | 317,700 |
| 9 | 512,500 | 367,500 |
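To compare the offers with the expected values directly, the sketch below expresses each offer as a fraction of the expected value, using the numbers from the table, and checks the last two expected values against the amounts still in play at those points ($400, $25,000, $1,000,000 after round 8; $25,000 and $1,000,000 after round 9).

```python
# (expected value, banker's offer) per round, copied from the table above
ROUNDS = [(130_857, 30_565), (142_475, 37_361), (125_186, 54_507),
          (153_319, 57_554), (170_967, 67_160), (205_160, 108_950),
          (256_425, 176_781), (341_800, 317_700), (512_500, 367_500)]

for rnd, (ev, offer) in enumerate(ROUNDS, start=1):
    print(f"round {rnd}: offer is {offer / ev:.0%} of expected value")

# The last two expected values follow directly from the amounts still in play
assert (400 + 25_000 + 1_000_000) / 3 == 341_800
assert (25_000 + 1_000_000) / 2 == 512_500
```

The fraction runs from about 23% in round 1 to 93% in round 8, though not steadily, and falls back to 72% in round 9.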

We note that the banker's offer in each round is less than the expected amount in our briefcase. While it seems clear at the beginning that we are right to refuse the offer, this is less obvious as we near the end. For example, after the 8th round the only amounts left were $400, $25,000, and $1,000,000. We were offered $317,700, which is a pretty nice amount of money. If we rejected it, we would have a 2/3 chance of ending up with a relatively small amount and a 1/3 chance of getting the million dollars. We might well be risk averse at this point and accept the offer. However, we didn't, and the briefcase we chose to open had the $400. Now the only amounts left were $25,000 and $1,000,000, and we were offered $367,500. Again, we might think twice about refusing this. But we did refuse it and opened the last briefcase, which had the million dollars, so our briefcase had $25,000. If you watch the TV program, you will find that most contestants refuse the banker's offers in the early rounds but accept one in a later round.

Deal or No Deal was introduced in Australia in 2003 and currently airs in 38 countries. Post and his co-authors obtained videos of 53 episodes from Australia and the Netherlands. From these they determined, for each contestant and each round, the situation the contestant faced in terms of the amounts of money in the remaining briefcases, the banker's offer, and the contestant's decision to accept or refuse it. They then analyzed these data using the Arrow-Pratt measure of relative risk aversion (RRA). The authors write:

In every game round, a unique RRA coefficient can be determined at which the contestant would be indifferent between accepting and rejecting the bank offer. If the contestant accepts the offer, his RRA must be higher than this value; if the offer is rejected, his RRA must be lower.

They also investigate how this RRA changes according to other variables such as estimated income and education.

The authors find that, for a wealth level of €25,000, averaging over all the contestants gives an RRA of 1.61. This estimate decreases when the wealth level decreases and increases when it increases. They say:

The degree of risk aversion differs strongly across the contestants, some exhibiting strong risk averse behavior (RRA > 5) and others risk seeking behavior (RRA < 0). The differences can be explained in large part by the earlier outcomes experienced by the contestants in previous rounds of the game. Most notably, RRA generally decreases following losses. Contestants facing a large reduction in the expected prize during the game even exhibit risk seeking behavior.
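The indifference idea in the authors' description can be made concrete with a small numerical sketch. It assumes constant relative risk aversion (CRRA) utility, u(W + x) = (W + x)^(1-ρ)/(1-ρ) (log utility when ρ = 1), applied to initial wealth W plus prize x. It also simplifies by comparing the offer only with the lottery over the amounts still in play, ignoring any future offers. That simplification and the function names are ours; the authors' model may treat later rounds differently.

```python
import math

def certainty_equivalent(amounts, rho, wealth):
    """Certainty equivalent of an equally likely draw from `amounts` for a
    contestant with CRRA utility (coefficient rho) over wealth + prize."""
    ys = [wealth + x for x in amounts]
    m = min(ys)  # rescale by the smallest outcome to keep powers in range
    e = 1.0 - rho
    if abs(e) < 1e-6:
        # rho = 1 is log utility: the CE is the geometric mean of final wealth
        mean_log = sum(math.log(y / m) for y in ys) / len(ys)
        return m * math.exp(mean_log) - wealth
    mean_pow = sum((y / m) ** e for y in ys) / len(ys)
    return m * mean_pow ** (1.0 / e) - wealth

def indifference_rra(amounts, offer, wealth, lo=-5.0, hi=20.0, tol=1e-10):
    """Bisect for the rho at which the offer equals the certainty equivalent.
    The CE falls as rho rises, so accepting the offer implies a larger rho
    than the one returned, and rejecting it implies a smaller one."""
    def gap(r):
        return certainty_equivalent(amounts, r, wealth) - offer
    if gap(lo) <= 0 or gap(hi) >= 0:
        raise ValueError("indifference point is not inside [lo, hi]")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if gap(mid) > 0:
            lo = mid  # CE still above the offer: need more risk aversion
        else:
            hi = mid
    return 0.5 * (lo + hi)

# Final offer of our second game, with a hypothetical wealth of 25,000
rho_star = indifference_rra([25_000, 1_000_000], 367_500, wealth=25_000)
print(f"rejecting $367,500 implies RRA below {rho_star:.2f}")
```

For the last offer in our second game ($25,000 or $1,000,000, offer $367,500), taking W = 25,000 (the wealth level quoted above, applied to dollar amounts purely for illustration), the indifference value comes out a little under 0.5. Under these assumptions our refusal corresponds to an RRA below that level.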

DISCUSSION

(1) Suppose that in each round the banker offered you the expected value of the amount in your briefcase and you were only interested in maximizing your expected winnings. Would it matter when you stopped?

(2) On the web version, the banker is obviously using an algorithm to determine the amount of money to offer you in a particular round, which depends only on information available at the beginning of the round. Professor Post tells us:

The bank behaved in a predictable manner, with offers around 5-10% of expected value in round one and 100% in the later rounds. Also, for losers, the offers are relatively generous, sometimes exceeding 100%.

Assume that, based on this information, we make a model for the banker's offers. How would you determine the optimal stopping rule?
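For question (1), one way to start is to check whether the expected amount in our briefcase tends to drift from one round to the next. The sketch below (function names our own) averages the next-round expected value over every equally likely set of k cases that could be opened, using exact fractions. It relies on the symmetry that, from the contestant's point of view, every set of k unopened amounts is equally likely to be the one revealed.

```python
from fractions import Fraction
from itertools import combinations

def ev(amounts):
    """Expected amount in our case; amounts are whole dollars (ints) so the
    arithmetic stays exact."""
    return Fraction(sum(amounts), len(amounts))

def average_ev_after_opening(amounts, k):
    """Average the next-round expected value over every equally likely
    set of k amounts that could be revealed."""
    total = Fraction(0)
    count = 0
    for opened in combinations(range(len(amounts)), k):
        left = [a for i, a in enumerate(amounts) if i not in opened]
        total += ev(left)
        count += 1
    return total / count

state = [400, 25_000, 1_000_000]  # the round-8 position from our second game
print(ev(state), average_ev_after_opening(state, 1))  # 341800 341800
```

From the round-8 position, the three possible reveals give next-round expected values of $512,500, $500,200, and $12,700, which average back to $341,800.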

Submitted by Laurie Snell