Chance News 76

August 10, 2011 to September 6, 2011

Quotations

"Counting is the religion of this generation. It is its hope and its salvation."

Submitted by Steve Simon.

“When the facts change, I change my opinion. What do you do, sir?”

(You can read a review of McGrayne's book by John Allen Paulos in the NYT, 5 August 2011).

Submitted by Bill Peterson

Noting that the US Open tennis tournament is now in full swing, Paul Alper forwarded this excerpt from p. 8 of the book:

Assuming equal probabilities was a pragmatic approach for dealing with uncertain circumstances. The practice was rooted in traditional Christianity and the Roman Catholic Church's ban on usury. In uncertain situations such as annuities or marine insurance policies, all parties were assigned equal shares and divided profits equally. Even prominent mathematicians assigned equal probabilities to gambling odds by assuming with a remarkable lack of realism, that all tennis players or fighting cocks were equally skillful.

"If you want to convince the world that a fish can sense your emotions, only one statistical measure will suffice: the p-value."

Submitted by Paul Alper

"[An economist] suggests that we go easy on statisticians. 'Everyone makes spreadsheet mistakes,' he says. He repeats advice he received from his best man at his wedding: 'The best way to remember your wedding anniversary is to forget it once.' "

The Wall Street Journal, August 20, 2011

Submitted by Margaret Cibes

“In God we trust," Mayor Michael R. Bloomberg is fond of saying, before invariably adding a caveat: "Everyone else, bring data."

But whom is the mayor quoting? "In God we trust, all others bring data" was featured on a T-shirt at the Joint Statistical Meetings! It is usually attributed to W. Edwards Deming. In fact, that is the attribution given in the preface to Elements of Statistical Learning by Trevor Hastie, Robert Tibshirani, and Jerome Friedman (2nd edition, Springer 2009). In a footnote there, we read:

On the Web, this quote has been widely attributed to both Deming and Robert W. Hayden; however Professor Hayden told us that he can claim no credit for this quote, and ironically we could find no 'data' confirming Deming actually said this.

Submitted by Bill Peterson

Forsooth

“My classical metaphor: A Turkey is fed for 1000 days—every day confirms to its statistical department that the human race cares about its welfare 'with increased statistical significance'. On the 1001st day, the turkey has a surprise.”

Edge: The Third Culture, September 15, 2008

Submitted by Margaret Cibes

Dispute over statistics in social network analysis

Catching Obesity From Friends May Not Be So Easy. Gina Kolata, New York Times, August 8, 2011.

Social network analysis has led to some rather intriguing findings about your health.

Does obesity spread like a virus through networks of friends and friends of friends? Do smoking, loneliness, happiness, depression and illegal drug use also proliferate through social networks? Over the past few years, a series of highly publicized studies by two researchers have concluded that these behaviors can be literally contagious — passed from person to person.

These findings, published in the New England Journal of Medicine in 2007, have come under attack by statisticians.

“I know that many professional statisticians felt it was all bunk from the word go,” said Russell Lyons, a mathematics professor at Indiana University, who recently published a scathing review of the work on contagion of social behaviors.

The authors of the 2007 publication used a massive cohort study that had

data gathered from 12,067 subjects in a long-running federal study, the Framingham Heart Study. The data included 32 years of medical records, including such routine data as body weight and smoking habits.

The unique feature of the Framingham study was that each participant was asked to name a friend who could help locate them if a follow-up exam was missed. This allowed the researchers to apply social network analysis to this data set and to correlate it with measures of health, such as obesity.

In analyzing the Framingham data, Dr. Christakis and Dr. Fowler found that friends, and friends of friends, had similar levels of obesity, but neighbors did not.

There are several explanations for this finding, which the researchers addressed in their article.

One was homophily, the tendency to choose friends like oneself. A second explanation was that people are affected in the same ways by the environments they share with their friends.

The third explanation, and the one that garnered so much attention, was contagion. Dr. Christakis and Dr. Fowler focused on this as a cause of obesity, saying that they could estimate its effect and that it was large. They theorized that a person’s idea of an acceptable weight, or an acceptable portion size, changes when he sees how big his friends are or how much they eat.

How can you disentangle these three possible mechanisms that might produce a correlation of obesity among friends?

In their first study, for example, Dr. Christakis and Dr. Fowler wrote that if person A names person B as a friend and B does not name A, then B’s weight affects A. If B gets fat, the researchers estimated, then A has a 57 percent chance of getting fat as well. But if A gets fat, the researchers said, it has no effect on B.

But a careful statistical analysis noted some problems.

But those estimates did not come from the raw data, Dr. Lyons pointed out. They came from the researchers’ statistical model. And the estimates actually showed that when B did not name A as a friend, B was still 13 percent more likely to become obese.

The difference between 57% and 13% was not large enough to achieve statistical significance. Perhaps, countered the authors, but the results they found were consistent across multiple studies.

Questions

1. Does a result that is not statistically significant, but which is replicated across multiple studies, constitute proof of causation?

2. What are the social implications if the results of Christakis and Fowler are true?

Submitted by Steve Simon.

Defining famine

“The Challenge of Drawing the Line on a Famine”

by Carl Bialik, The Wall Street Journal, August 6, 2011

"The Hunger for Reliable Hunger Stats"

by Carl Bialik, The Wall Street Journal, August 5, 2011

Four years ago, a group of humanitarian organizations “devised strict quantitative thresholds for rates of death, malnutrition and food shortages, each of which must be met before a food crisis becomes a famine. Somalia’s famine is the first to officially have cleared these cutoffs since they were embraced by international aid organizations.”

http://si.wsj.net/public/resources/images/NA-BM710A_NUMBG_G_20110805233303.jpg

In the recent Somalia case, local folks went out into “drought-stricken regions, selected a sample of Somalis and asked them about deaths in their households from April to June, then measured children in the households to check their ratio of weight to height.” By the time of this article, five areas had been labeled as being in a state of famine. The chief technical advisor stated:

The difference between 1.94 [the death rate per 10,000 in one Somali region that missed the famine cut] and 2 is nothing, …. But when we say famine we mean it. Nobody will dispute this.

A year ago aid groups were issuing probability estimates of their famine forecasts, hoping to mobilize aid. However, one person warned that “A forecast of a 20% chance of famine, for example, could be ignored because there is an 80% chance none will occur.”

See Chance News 75 for a posting on the related issue of measuring hunger.

Questions

To put into perspective the size of the population affected in Somalia, consider a hypothetical famine or acute malnutrition condition in the U.S. (or even your own state) today. For purposes of simplification, assume (unrealistically) that there is no increase in U.S. population over one year, i.e., ignore the effect of births and immigration.

1. Estimate the number of U.S. residents who would have to die today in order for the country to be considered to be experiencing a famine. Identify the type of geographic region - rural, suburb, city, state - that would correspond in size to your estimate.

2. Repeat for the number of U.S. residents who would have to experience acute malnutrition today, assuming that no one is counted in both the mortality and malnutrition categories.

3. Estimate the total number of U.S. residents who would have died by the end of one year if the rate remained constant. (Note that, while the rate may remain constant over time, absolute mortality counts would change as the base population decreased from day to day.)

4. It is not possible to estimate the total number of U.S. residents who would have experienced acute malnutrition by the end of one year from this data alone. Why not? What additional information/data would we need?
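As a starting point for questions 1 and 3, the arithmetic can be sketched in a few lines of Python. This is only a rough sketch under stated assumptions: the mortality cutoff of 2 per 10,000 quoted above is read here as a daily rate, and the U.S. population figure of 311 million is an approximate value supplied for illustration, not a number from the articles.

```python
# Assumption: approximate 2011 U.S. population (not taken from the articles).
US_POPULATION = 311_000_000
# Assumption: the cutoff of 2 deaths per 10,000 people is treated as a daily rate.
DAILY_DEATH_RATE = 2 / 10_000


def deaths_on_first_day(population, daily_rate):
    """Deaths in a single day at the given daily rate."""
    return population * daily_rate


def cumulative_deaths(population, daily_rate, days):
    """Deaths over `days` days when the rate applies to a shrinking population."""
    survivors = population * (1 - daily_rate) ** days
    return population - survivors


first_day = deaths_on_first_day(US_POPULATION, DAILY_DEATH_RATE)
one_year = cumulative_deaths(US_POPULATION, DAILY_DEATH_RATE, 365)

print(f"Deaths on day one: {first_day:,.0f}")
print(f"Deaths over 365 days: {one_year:,.0f}")
```

Because the base population shrinks each day, the one-year total is somewhat smaller than 365 times the first-day count, which is the point of the note in question 3.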

Submitted by Margaret Cibes

Seeding trials

So to speak, all that glitters is not the gold standard. The gold standard in the medical world, respected by statisticians, medical practitioners and the public alike, is the randomized clinical trial. Depending on what tribe of statistics one belongs to, the p-value, the effect size, or the posterior probability would determine whether or not a given treatment is superior to the others and/or a placebo.

But just because something has the appearance of a randomized clinical trial does not mean it is one. As reported by Carl Elliott in the NYT,

LAST month, the Archives of Internal Medicine published a scathing reassessment of a 12-year-old research study of Neurontin [gabapentin], a seizure drug made by Pfizer. The study [often referred to as the STEPS trial], which had included more than 2,700 subjects and was carried out by Parke-Davis (now part of Pfizer), was notable for how poorly it was conducted. The investigators were inexperienced and untrained, and the design of the study was so flawed it generated few if any useful conclusions. Even more alarming, 11 patients in the study died and 73 more experienced “serious adverse events.”

One reason is that the study was not quite what it seemed. It looked like a clinical trial, but as litigation documents have shown, it was actually a marketing device known as a “seeding trial.” The purpose of seeding trials is not to advance research but to make doctors familiar with a new drug.

The headline for Carl Elliott’s article is “Useless Studies, Real Harm.” The “useless” aspect derives from the following: seeding trials typically follow a legitimate clinical trial in which the medication has been approved but is not really very different from competitors. The seeding trial generates publicity and sales because “the doctors gradually get to know the drug, making them more likely to prescribe it later.” According to Wikipedia:

A “seeding trial” or “marketing trial” is a form of marketing, conducted in the name of research, designed to target product sampling towards selected consumers. In medicine, seeding trials are clinical trials or research studies in which the primary objective is to introduce the concept of a particular medical intervention—such as a pharmaceutical drug or medical device—to physicians, rather than to test a scientific hypothesis.

Seeding trials are not illegal, but such practices are considered unethical. The obfuscation of true trial objectives (primarily marketing) prevents the proper establishment of informed consent for patient decisions. Additionally, trial physicians are not informed of the hidden trial objectives, which may include the physicians themselves being intended study subjects (such as in undisclosed evaluations of prescription practices). Seeding trials may also utilize inappropriate promotional rewards, which may exert undue influence or coerce desirable outcomes.

Wikipedia then cites a 1994 NEJM article which describes some of the characteristics of a seeding trial:

- The trial is of an intervention with many competitors

- Use of a trial design unlikely to achieve its stated scientific aims (e.g., un-blinded, no control group, no placebo)

- Recruitment of physicians as trial investigators because they commonly prescribe similar medical interventions rather than for their scientific merit

- Disproportionately high payments to trial investigators for relatively little work

- Sponsorship is from a company's sales or marketing budget rather than from research and development

- Little requirement for valid data collection

Discussion

1. The STEPS trial for Neurontin is very similar in scope and motivation to the earlier ADVANTAGE trial regarding Vioxx. Do a Google search and compare and contrast ADVANTAGE with STEPS.

2. Real clinical trials tend to have a few specialized centers with many patients per center. Seeding trials tend to have many untrained physicians with just a handful of patients per physician. According to Ben Goldacre, the STEPS trial

sent recruitment letters to 5,000 doctors inviting them to be investigators on the trial. 1,500 attended a briefing, with study information and promotional material on the drug. Doctors participating as "investigators" were only allowed to recruit a few patients each, with four on average at each site, all under the close supervision of company sales reps.

The investigators were poorly trained, with little or no experience of trials, and there was no auditing before the trial began. So the data was poor, but worse than that, drug sales reps were directly involved in collecting it, filling out study forms and handing out gifts as promotional rewards during data collection.

3. IRB stands for institutional review board:

An institutional review board (IRB), also known as an independent ethics committee (IEC) or ethical review board (ERB), is a committee that has been formally designated to approve, monitor, and review biomedical and behavioral research involving humans with the aim to protect the rights and welfare of the research subjects. In the United States, the Food and Drug Administration (FDA) and Department of Health and Human Services (specifically Office for Human Research Protections) regulations have empowered IRBs to approve, require modifications in planned research prior to approval, or disapprove research. An IRB performs critical oversight functions for research conducted on human subjects that are scientific, ethical, and regulatory.

Carl Elliott indicates that IRBs

don’t typically pass judgment on whether a study is being carried out merely to market a drug. Nor do most I.R.B.’s have the requisite expertise to do so. Even worse, many I.R.B.’s are now themselves for-profit businesses, paid directly by the sponsors of the studies they evaluate. If one I.R.B. gets a reputation for being too strict, a pharmaceutical company can simply go elsewhere for its review.

Last week, the federal government announced that it was overhauling its rules governing the protection of human subjects. But the new rules would not stop seeding trials. It is time to admit that I.R.B.’s are simply incapable of overseeing a global, multibillion-dollar corporate enterprise.

Joe Orton wrote a famous play in the 1960s, Loot, in which a home owner demands a warrant from the intruding police inspector; the inspector claims that since he is from the water board, no warrant is necessary. Similarly, even in the unlikely event that IRBs could be strengthened, a seeding trial organizer might paradoxically claim that any IRB has no jurisdiction because an IRB is set up to deal with real clinical trials, not bogus ones such as a seeding trial!

4. When you read an article in a newspaper or see a report on television, how do you know whether you are reading a genuine journalistic commentary vs. surreptitious advertising?

Submitted by Paul Alper

Journal retractions on the rise

“Mistakes in Scientific Studies Surge”

by Gautam Naik, The Wall Street Journal, August 10, 2011

This article focuses on stories about retractions by Lancet, but it also provides charts of data about retractions in other science journals. It points out the potential harm done to patients whose doctors have to wait a year or more, in some cases, for these retractions.

See the first three charts. Two are based on data about retractions categorized by scientific field and by journal, respectively, comparing the periods 2001-05 and 2006-10 (Source: Thomson Reuters). A third shows average lag time (months) between publication and retraction for articles in medicine and biology journals (Source: Journal of Medical Ethics, December 2010). Abstracts of JME articles on retractions are available here.

A fourth chart is also provided:

http://si.wsj.net/public/resources/images/P1-BB920A_RETRA_NS_20110809185403.jpg

Regarding one of the Lancet studies, the article says

As often happens, the original paper had inspired clinical research by others to test the dual therapy—studies that enrolled up to 36,000 patients, according to Dr. Steen, the analyst who did a study of retractions. "If there's a bad trial out there, there will be more flawed secondary trials, which put more patients at risk," he said.

Dr. Kunz in Switzerland said the Lancet and its peer reviewers ought to have been more skeptical about the overly positive results and should have caught the statistical anomaly she noticed. "Journals all want to have spectacular results," she said. "Increasingly, they're willing to publish more risky papers."

There are incentives on the other side, too. As the article quotes Lancet editor Richard Horton, "The stakes are so high. A single paper in Lancet and you get your chair and you get your money. It's your passport to success."

Submitted by Margaret Cibes

A penny for your thoughts

“Penny Auctions Draw Bidders With Bargains”

by Ann Zimmerman, The Wall Street Journal, August 17, 2011

Penny auctions were started online in the U.S. about 2.5 years ago and now number 165. However, watchdog organizations are receiving complaints ranging in content from incomplete disclosure of terms and conditions to allegations of fraud.

In a penny auction, bidders may win items at bargain prices, but bidders must pay the amount of their bids - plus a fee - even if they lose.

Although every bid raises the item price by just one penny, each bid costs between 60 cents and $1 to place, depending on the site. The sums add up fast: a Nikon D90 camera with a selling price on Beezid.com of $128 actually sold for that price plus 12,800 bids costing 60 cents each, for a total of $7,808.
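The figures in the quoted example can be checked with a short calculation. The sketch below works in whole cents to avoid floating-point rounding and assumes, for illustration, that the auction started at a price of zero, so that the number of bids equals the final price divided by the one-cent increment. The function name is hypothetical.

```python
def penny_auction_totals(final_price_cents, bid_fee_cents, increment_cents=1):
    """Return (number of bids, bid-fee revenue, total taken in), all in cents.

    Assumption: bidding starts at a price of zero, so every cent of the
    final price corresponds to exactly one bid.
    """
    n_bids = final_price_cents // increment_cents
    fee_revenue = n_bids * bid_fee_cents
    total = final_price_cents + fee_revenue
    return n_bids, fee_revenue, total


# Nikon D90 example: final price of 128 dollars, 60 cents per bid.
n_bids, fee_revenue, total = penny_auction_totals(12_800, 60)

print(f"Bids placed: {n_bids:,}")
print(f"Bid fees collected: ${fee_revenue / 100:,.2f}")
print(f"Total taken in by the site: ${total / 100:,.2f}")
```

Note that the total is what the site collects from all bidders combined; the winner pays only the final price plus the fees for his or her own bids.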

Although the Washington state attorney general’s office does not believe that penny auctions are illegal gambling websites, one resident is suing the Quibids penny auction in federal court:

Quibids offers nothing more to its customers than a low probability of purchasing merchandise at a discount.

A Quibids executive takes the following position:

"Penny auctions are clearly not gambling … Winning an auction takes skill and that's an example of one of the many reasons it's not gambling. No customer has to lose money … because the site offers every bidder the chance to buy the item at retail, with their losing bids credited against the price."

Submitted by Margaret Cibes

Discussion

1. Do penny auctions seem like gambling to you?

2. What strategy, if any, would you consider a winning one?

3. Do you agree that “no customer has to lose money”?

Salmon and p-values

fMRI Scanning salmon - seriously

Neuroskeptic blog, 27 January 2011

As Charles Seife noted in the Scientific American article referenced above in Quotations:

When they presented the fish with pictures of people expressing emotions, regions of the salmon’s brain lit up. The result was statistically significant with a p-value of less than 0.001; however, as the researchers argued, there are so many possible patterns that a statistically significant result was virtually guaranteed, so the result was totally worthless. p-value notwithstanding, there was no way that the fish could have reacted to human emotions. The salmon in the fMRI happened to be dead.

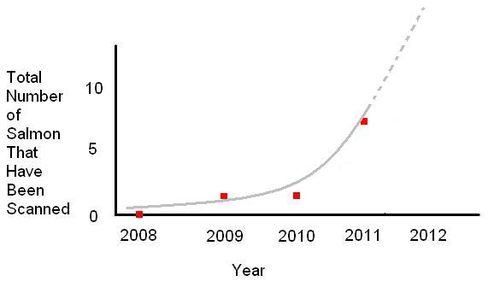

The present blog post attributes this finding to the multiple comparisons problem. It does, however, reference a real application of fMRI scanning of salmon, in an effort to understand their navigational prowess as they return to waterways where they were born in order to reproduce. Nevertheless, the author can't help ending with a tongue-in-cheek graphic:

So fishMRI is clearly a fast-developing area of neuroscience. In fact, as this graph shows, it's enjoying exponential growth and, if current trends continue, could become almost as popular as scanning people...
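The multiple comparisons problem mentioned above can be illustrated with a simple calculation. The sketch below is not the salmon researchers' analysis. It assumes, unrealistically for neighboring brain regions, that the tests are independent, and it uses the 0.001 threshold from the quotation. The numbers of tests are arbitrary illustrative values.

```python
def prob_at_least_one_false_positive(n_tests, alpha):
    """Chance that at least one of n independent tests falls below alpha
    when every null hypothesis is true."""
    return 1 - (1 - alpha) ** n_tests


ALPHA = 0.001  # threshold mentioned in the quotation

# Assumption: these test counts are illustrative, not taken from the study.
for n_tests in (1, 100, 1_000, 10_000):
    p = prob_at_least_one_false_positive(n_tests, ALPHA)
    print(f"{n_tests:>6} tests: P(at least one 'significant' result) = {p:.4f}")
```

With enough comparisons, a "significant" result somewhere becomes close to certain even when nothing is going on, which is why corrections for multiple comparisons are needed.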

Submitted by Paul Alper

Update

For more commentary, see Beware dead fish statistics on the Neuroskeptic blog, 26 November 2011

Paul Meier dies at 87

Paul Meier, statistician who revolutionized medical trials, dies at 87

by Dennis Hevesi, New York Times, 12 August 2011

This is Paul Meier of the famous Kaplan-Meier estimator in survival analysis. It is interesting to read here about Meier's important role as an early advocate for randomization techniques.

As early as the mid-1950s, Dr. Meier was one of the first and most vocal proponents of what is called “randomization.” Under the protocol, researchers randomly assign one group of patients to receive an experimental treatment and another to receive the standard treatment. In that way, the researchers try to avoid unintentionally skewing the results by choosing, for example, the healthier or younger patients to receive the new treatment.
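A minimal sketch of the idea of random assignment follows. It is simple randomization into two equal-sized groups, offered only as an illustration; real trials typically use more elaborate schemes, and the function name, patient IDs, and seed are all hypothetical.

```python
import random


def randomize(patient_ids, seed=None):
    """Randomly split patients into an experimental and a standard-treatment group."""
    rng = random.Random(seed)  # a seed makes the allocation reproducible
    shuffled = list(patient_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"experimental": shuffled[:half], "standard": shuffled[half:]}


# Hypothetical example: 20 patients identified by the numbers 1 to 20.
groups = randomize(range(1, 21), seed=2011)
print("Experimental:", sorted(groups["experimental"]))
print("Standard:    ", sorted(groups["standard"]))
```

Because chance alone decides who gets which treatment, the researcher cannot steer healthier or younger patients toward the new treatment, even unintentionally.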

Researchers were not always attuned to Dr. Meier’s advocacy. “When I said ‘randomize’ in breast cancer trials,” he recalled in a 2004 interview for Clinical Trials, the publication of the Society for Clinical Trials, “I was looked at with amazement by my medical colleagues: ‘Randomize? We know that this treatment is better than that one.’ I said, ‘Not really!’ ”

We are of course accustomed to hearing the name R.A. Fisher in this context. Meier's role in the U.S. is described in the article by Richard Peto of Oxford University, who wrote that Meier "perhaps more than any other U.S. statistician, was the one who influenced U.S. drug regulatory agencies, and hence clinical researchers throughout the U.S. and other countries, to insist on the central importance of randomized evidence.”

Submitted by Paul Alper

Outlier, anyone?

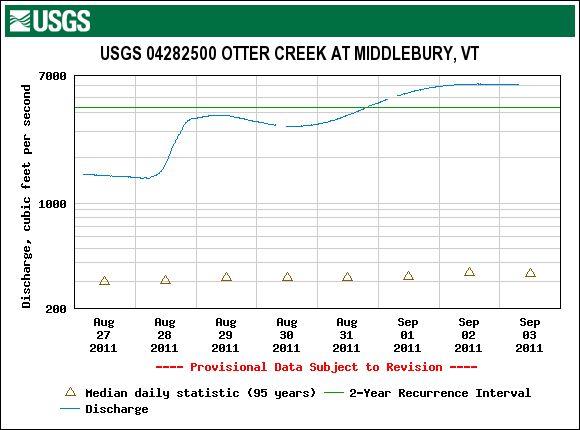

Middlebury was largely spared from the worst effects of Hurricane Irene, but the Otter Creek is now cresting from the runoff.

The U.S. Geological Survey presents the following summary statistics for the mean daily discharge rate at Middlebury, in cubic feet per second, based on 95 years of records.

Daily discharge statistics, in cfs, for September 2

| Min (2002) | 25th percentile | Median | Mean | 75th percentile | Max (1988) | Most Recent Instantaneous Value Sep 2 |
|---|---|---|---|---|---|---|
| 124 | 221 | 324 | 439 | 500 | 1540 | 6140 |

You can see an indication of the approaching crest in the time series plot for the last week.

Discussion

You can read the USGS definitions of some of these terms here; in particular, note the discussion of discharge rate and recurrence interval. The above might seem to give two different impressions about how unusual the recent data are. The discharge rate appears as a large outlier among the 95 years summarized in the table, yet the graph gives a two-year recurrence interval for flows approaching this magnitude. How do you reconcile these?
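One way to quantify how far the recent value sits from the tabulated statistics is the common 1.5 × IQR rule of thumb for flagging outliers. This rule is brought in here for illustration and is not part of the USGS summary. The sketch also compares an instantaneous reading with daily means for a single calendar date, so it speaks only to the table, not to the recurrence interval.

```python
# Values from the table above, in cubic feet per second.
q1, q3 = 221, 500
historical_max = 1540
recent = 6140

iqr = q3 - q1                    # interquartile range
upper_fence = q3 + 1.5 * iqr     # rule-of-thumb outlier cutoff (an assumption here)

print(f"IQR: {iqr} cfs")
print(f"Upper fence: {upper_fence} cfs")
print(f"Recent value exceeds fence: {recent > upper_fence}")
print(f"Recent value / historical max: {recent / historical_max:.2f}")
```

By this rule even the 1988 maximum lies beyond the fence, so the comparison says more about the skewness of September 2 flows than about how rare such a flow is over a whole year.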

Submitted by Jeanne Albert